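The author uses CentOS 7 and VMware Workstation 16 Pro. If you need the Java and Hadoop installation packages used in this article, send me a private message.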

Log in to the CentOS system, change to /etc/sysconfig/network-scripts, and edit the configuration file of the NAT network adapter: vi ifcfg-ens33

Set the following variables:

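BOOTPROTO=static # disable DHCP; optional, skipping it does no harm

ONBOOT=yes # bring the interface up at boot

IPADDR=192.168.253.5 # IP address of this virtual machine

GATEWAY=192.168.253.2 # gateway address

DNS1=192.168.253.2 # DNS server; set it according to your own network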
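Before restarting the network it can help to confirm that none of the variables above was left out. The following is a minimal sketch, not part of the original procedure: `check_ifcfg` is a hypothetical helper name, and it only checks that each key is present, not that the value is valid.

```shell
# Sketch: list which of the keys set above are missing from an ifcfg-style file.
# check_ifcfg is a hypothetical helper, not a CentOS command.
check_ifcfg() {
    local file=$1
    local key
    local missing=0
    for key in BOOTPROTO ONBOOT IPADDR GATEWAY DNS1; do
        # A key counts as present when a line starts with "KEY=".
        if ! grep -q "^${key}=" "$file"; then
            echo "missing: ${key}"
            missing=1
        fi
    done
    return "$missing"
}

# Usage:
#   check_ifcfg /etc/sysconfig/network-scripts/ifcfg-ens33
```

The function returns a non-zero status when at least one key is missing, so it can be used in an `if` before running the restart.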

Restart the network service so that the configuration takes effect:

systemctl restart network

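Once the configuration is applied, use the ping command to check that the network works.

Stop the firewall and disable it at boot:

[root@master network-scripts]# systemctl stop firewalld.service

[root@master network-scripts]# systemctl disable firewalld.service

If the firewall stays on, the Hadoop web UI cannot be opened from a browser and files cannot be uploaded.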

Configure clock synchronization:

[root@master network-scripts]# yum install ntpdate

[root@master ~]# ntpdate ntp.aliyun.com

5 Oct 17:13:32 ntpdate[2607]: adjust time server 203.107.6.88 offset -0.304230 sec

[root@master ~]# date

Wed Oct 5 17:13:38 CST 2022

Change the hostname to master:

[root@master ~]# hostnamectl set-hostname master

[root@master ~]# hostname

master

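Configure the hosts list:

[root@master ~]# vi /etc/hosts

Add the host's IP address and the hostname master:

192.168.133.142 master

The IP address and hostname are added so that every server in the cluster knows the names and addresses of the others.

After this entry is added, ping master reaches the corresponding IP address.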
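To check a mapping without sending any packets, the hosts file can be read directly. The sketch below is an illustration only: `lookup_host` is a hypothetical helper that prints the address a name maps to in a hosts-format file. On a live system, `getent hosts master` asks the system resolver the same question.

```shell
# Sketch: print the IP address mapped to a hostname in a hosts-format file.
# lookup_host is a hypothetical helper name.
lookup_host() {
    awk -v name="$2" '
        /^[[:space:]]*#/ { next }    # skip comment lines
        {
            # Field 1 is the address; every later field is a name or alias.
            for (i = 2; i <= NF; i++) {
                if ($i == name) { print $1; exit }
            }
        }
    ' "$1"
}

# Usage:
#   lookup_host /etc/hosts master
```

An empty result means the name has no entry in the file yet.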

Create a personal directory, a Java directory, and a Hadoop directory:

[root@master ~]# mkdir /usr/zj

[root@master ~]# mkdir /usr/java

[root@master ~]# mkdir /usr/hadoop

Use Xftp to copy the Java installation package to /usr/zj, then extract it and move it to /usr/java:

[root@master zj]# tar -zxvf jdk-8u241-linux-x64.tar.gz

[root@master zj]# mv jdk1.8.0_241 /usr/java/

After installing Java, edit the system profile:

[root@master java]# vi /etc/profile

Append these two lines at the end:

export JAVA_HOME=/usr/java/jdk1.8.0_241

export PATH=$JAVA_HOME/bin:$PATH

Then apply the configuration and check the Java version:

[root@master java]# source /etc/profile

[root@master java]# java -version

java version "1.8.0_241"

Java(TM) SE Runtime Environment (build 1.8.0_241-b07)

Java HotSpot(TM) 64-Bit Server VM (build 25.241-b07, mixed mode)
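Editing /etc/profile by hand works, but repeating the steps can leave duplicate export lines. As a hedged alternative, the sketch below appends a line only when it is not already present. `append_once` is a hypothetical helper name, not something the original steps use.

```shell
# Sketch: append a line to a file only if that exact line is not already there.
# append_once is a hypothetical helper name.
append_once() {
    local file=$1
    local line=$2
    # -x matches whole lines, -F treats the text literally (no regex).
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Usage (as root); single quotes keep $JAVA_HOME unexpanded in the file:
#   append_once /etc/profile 'export JAVA_HOME=/usr/java/jdk1.8.0_241'
#   append_once /etc/profile 'export PATH=$JAVA_HOME/bin:$PATH'
```

Running the same call twice leaves the file unchanged the second time, which makes the step safe to repeat on cloned machines.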

Use Xftp to copy the Hadoop installation package to /usr/zj, then extract it and move it to /usr/hadoop:

[root@master zj]# tar -zxvf hadoop-2.10.1.tar.gz

[root@master zj]# mv hadoop-2.10.1 /usr/hadoop/

Edit the system profile, apply it, and then check the result:

[root@master zj]# vi /etc/profile

Append these two lines at the end:

export HADOOP_HOME=/usr/hadoop/hadoop-2.10.1

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

[root@master zj]# source /etc/profile

[root@master zj]# hadoop version

Hadoop 2.10.1

Subversion https://github.com/apache/hadoop -r 1827467c9a56f133025f28557bfc2c562d78e816

Compiled by centos on 2020-09-14T13:17Z

Compiled with protoc 2.5.0

From source with checksum 3114edef868f1f3824e7d0f68be03650

This command was run using /usr/hadoop/hadoop-2.10.1/share/hadoop/common/hadoop-common-2.10.1.jar

[root@master zj]# whereis hdfs

hdfs: /usr/hadoop/hadoop-2.10.1/bin/hdfs /usr/hadoop/hadoop-2.10.1/bin/hdfs.cmd

Point Hadoop at the Java installation:

[root@master zj]# cd /usr/hadoop/hadoop-2.10.1/etc/hadoop/

[root@master hadoop]# vi hadoop-env.sh

Find the following line:

export JAVA_HOME=${JAVA_HOME}

Change it to:

export JAVA_HOME=/usr/java/jdk1.8.0_241

Change to /usr/hadoop/hadoop-2.10.1/etc/hadoop/ and configure the core-site.xml file:

[root@master ~]# cd /usr/hadoop/hadoop-2.10.1/etc/hadoop/

[root@master hadoop]# vi core-site.xml

Add:

<configuration>

<!-- Entry address of the file system; either a hostname or an IP address -->

<!-- The default port is 8020 -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://master:8020</value>

</property>

<!-- Temporary working directory for Hadoop -->

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/hadoop/tmp</value>

</property>

</configuration>

Configure the yarn-env.sh file. Find this line:

export JAVA_HOME=/home/y/libexec/jdk1.6.0/

Change it to:

export JAVA_HOME=/usr/java/jdk1.8.0_241

Configure the hdfs-site.xml file:

[root@master hadoop]# vi hdfs-site.xml

Add:

<configuration>

<!-- Number of HDFS replicas; must be less than or equal to the number of slave nodes -->

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.http-address</name>

<value>master:50070</value>

</property>

<!-- Custom storage location for the HDFS NameNode -->

<!-- <property>-->

<!-- <name>dfs.namenode.name.dir</name>-->

<!-- <value>file:/usr/hadoop/dfs/name</value>-->

<!-- </property>-->

<!-- Custom storage location for the HDFS DataNode -->

<!-- <property>-->

<!-- <name>dfs.datanode.data.dir</name>-->

<!-- <value>file:/usr/hadoop/dfs/data</value>-->

<!--</property>-->

</configuration>

Use the cp command to create mapred-site.xml from mapred-site.xml.template (the same name without the .template suffix), then configure mapred-site.xml:

[root@master hadoop]# cp mapred-site.xml.template mapred-site.xml

[root@master hadoop]# vi mapred-site.xml

Add:

<configuration>

<!-- Run Hadoop MapReduce programs on YARN -->

<!-- The default value is local -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

Configure the yarn-site.xml file:

[root@master hadoop]# vi yarn-site.xml

Add:

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>master</value>

</property>

<!-- The NodeManager fetches data via shuffle -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

Edit the slaves file and replace its contents with the hostnames of the three slave virtual machines:

slave1

slave2

slave3
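When the number of worker nodes changes, the same file can be regenerated rather than retyped. The following is a small sketch under the assumption that workers are named slave1, slave2, and so on, as in this article. `write_slaves` is a hypothetical helper name.

```shell
# Sketch: overwrite a file with slave1..slaveN, one hostname per line.
# write_slaves is a hypothetical helper; the naming scheme follows this article.
write_slaves() {
    local file=$1
    local count=$2
    local i=1
    : > "$file"                      # truncate (or create) the target file
    while [ "$i" -le "$count" ]; do
        echo "slave${i}" >> "$file"
        i=$((i + 1))
    done
}

# Usage:
#   write_slaves /usr/hadoop/hadoop-2.10.1/etc/hadoop/slaves 3
```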

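Clone three slave machines and set their hostnames to slave1, slave2, and slave3 respectively.

In the /etc/sysconfig/network-scripts directory on each clone, change the IP address to 192.168.133.143, 192.168.133.144, and 192.168.133.145 respectively.

Edit the hosts file:

[root@master ~]# vi /etc/hosts

Change it to the following (the addresses must match the ones actually assigned to each machine):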

192.168.253.5 master

192.168.253.6 slave1

192.168.253.7 slave2

192.168.253.8 slave3

Run the following command on every virtual machine: ssh-keygen -t rsa

Distribute the public key:

[root@master .ssh]# cat id_rsa.pub >> authorized_keys

[root@master .ssh]# chmod 644 authorized_keys

[root@master .ssh]# systemctl restart sshd.service

[root@master .ssh]# scp /root/.ssh/authorized_keys slave2:/root/.ssh

[root@master .ssh]# scp /root/.ssh/authorized_keys slave3:/root/.ssh

[root@master .ssh]# scp /root/.ssh/authorized_keys slave1:/root/.ssh

Verify SSH login:

[root@master .ssh]# ssh master

The authenticity of host 'master (192.168.133.142)' can't be established.

ECDSA key fingerprint is SHA256:2Bffpg/A1;5pIpz1wxrvrtDAOWhygRaJnuRbywSEmOQ.

ECDSA key fingerprint is MD5:48:5d:59:ae:19:95:3d:88:4d:3d:56:46:0d:ff:fe:4a.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'master,192.168.133.142' (ECDSA) to the list of known hosts.

Last login: Wed Oct 5 18:51:56 2022 from 192.168.133.156

[root@master ~]# ssh slave1

Last login: Wed Oct 5 18:53:23 2022 from 192.168.133.156

[root@slave1 ~]# exit

logout

Connection to slave1 closed.

[root@master ~]# ssh slave2

Last login: Wed Oct 5 18:53:25 2022 from 192.168.133.156

[root@slave2 ~]# exit

logout

Connection to slave2 closed.

[root@master ~]# ssh slave3

Last login: Wed Oct 5 18:52:07 2022 from 192.168.133.156

[root@slave3 ~]# exit

logout

Connection to slave3 closed.

Format HDFS:

[root@master ~]# cd /usr/hadoop/hadoop-2.10.1/bin

[root@master bin]# hdfs namenode -format

Start the cluster:

start-all.sh

Check the running processes with jps:

[root@master bin]# jps

19301 Jps

1626 NameNode

1978 ResourceManager

1821 SecondaryNameNode
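Reading jps output by eye is easy to get wrong across four machines. The sketch below is an illustration rather than part of the original steps: `missing_daemons` is a hypothetical helper that reads jps output on standard input and prints each expected daemon name that does not appear.

```shell
# Sketch: read `jps` output on stdin and print every expected daemon that is absent.
# missing_daemons is a hypothetical helper name.
missing_daemons() {
    local output
    local name
    output=$(cat)
    for name in "$@"; do
        # jps prints "<pid> <name>"; compare the second field exactly.
        printf '%s\n' "$output" \
            | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }' \
            || echo "$name"
    done
}

# Usage on the master node:
#   jps | missing_daemons NameNode SecondaryNameNode ResourceManager
# Usage on a slave node (DataNode and NodeManager are the usual worker daemons):
#   jps | missing_daemons DataNode NodeManager
```

No output means every daemon named on the command line is running.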

View the SecondaryNameNode information at 192.168.133.142:50090

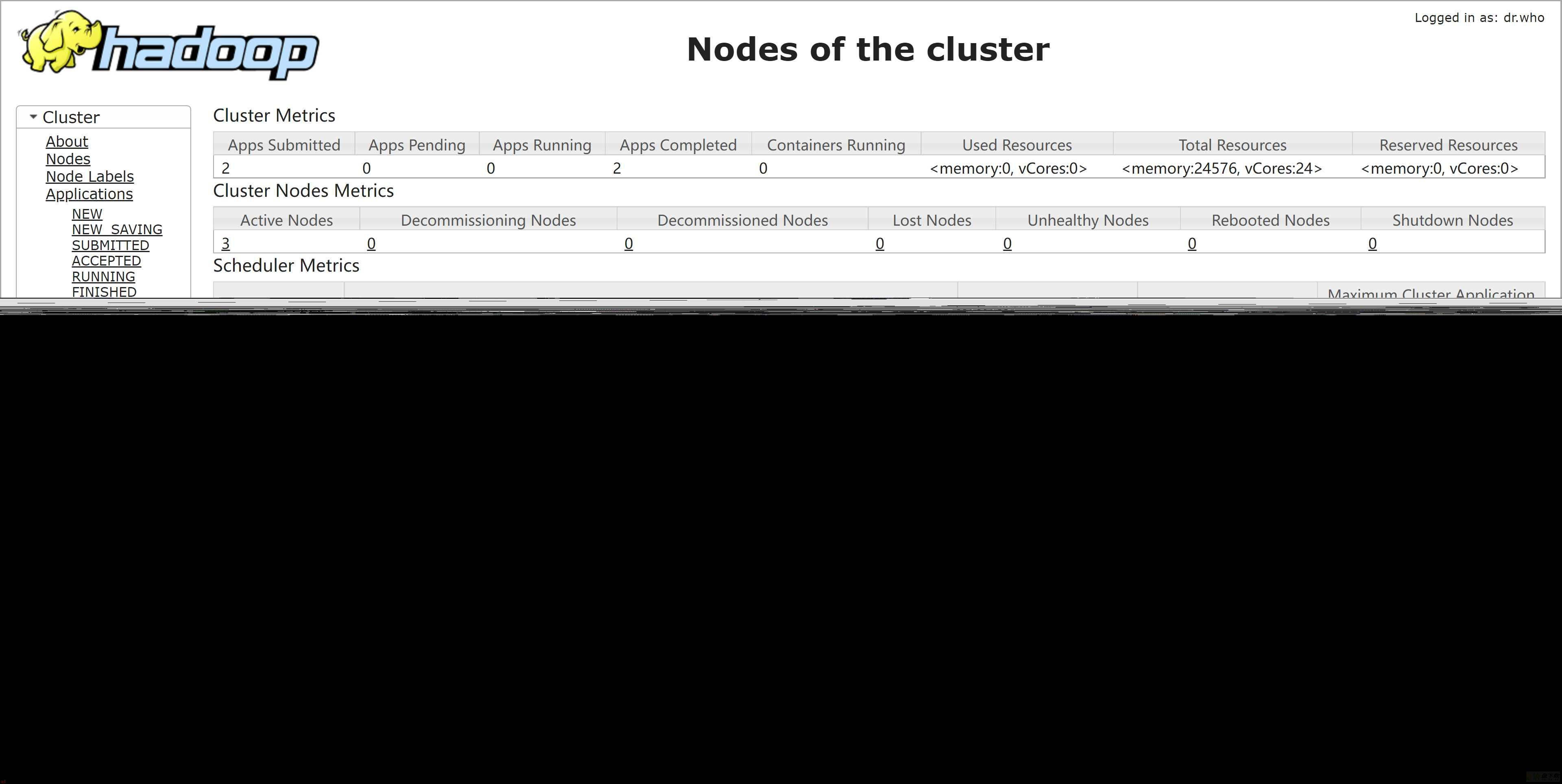

View the YARN web UI at 192.168.133.142:8088

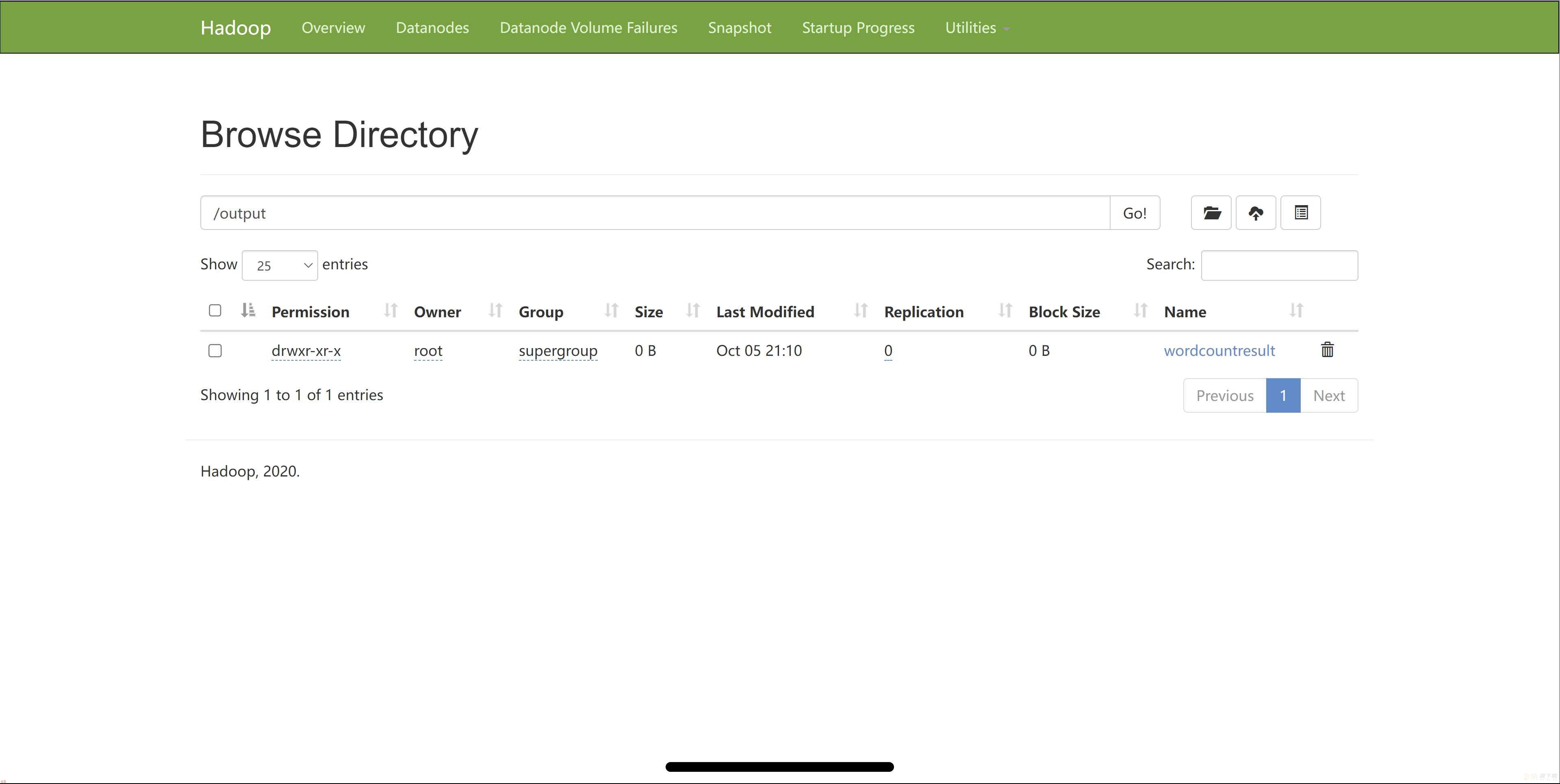

Create the input and output paths and the file to upload:

[root@master hadoop-2.10.1]# hadoop fs -mkdir -p /data/wordcount

[root@master hadoop-2.10.1]# hadoop fs -mkdir -p /output/

[root@master hadoop-2.10.1]# vi /usr/inputword

[root@master bin]# cat /usr/inputword

hello world

hello hadoop

hello hdfs

hello test

Upload the locally prepared input file to HDFS:

[root@master hadoop-2.10.1]# hadoop fs -put /usr/inputword /data/wordcount

[root@master hadoop-2.10.1]# bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.10.1.jar wordcount /data/wordcount /output/wordcountresult

[root@master hadoop-2.10.1]# hadoop fs -text /output/wordcountresult/part-r-00000

hadoop 1

hdfs 1

hello 4

test 1

world 1
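To sanity-check the job result, the same counts can be reproduced locally on the input file with standard text tools. This is a sketch for comparison only, not how MapReduce computes the result. `local_wordcount` is a hypothetical helper name.

```shell
# Sketch: count whitespace-separated words in a local file,
# printing "word<TAB>count" sorted by word.
# local_wordcount is a hypothetical helper name.
local_wordcount() {
    tr -s '[:space:]' '\n' < "$1" \
        | grep -v '^$' \
        | LC_ALL=C sort \
        | uniq -c \
        | awk '{ print $2 "\t" $1 }'
}

# Usage:
#   local_wordcount /usr/inputword
```

For the four-line input file above this yields the same five words and counts as the job output.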

Official wordcount command format:

bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.10.1.jar wordcount wcinput wcoutput

wordcount: the name of the example

wcinput: the input directory

wcoutput: the output directory